Frameworks, evidence, and analysis.

What I've been thinking about and the data that led me there.

Concept 01

Standardized tests present numbers with a specificity that implies an accuracy they don't have. Scores are presented in ways that ignore standard error, score ranges, and the fundamental psychological reality that human performance is never a fixed point. A number that appears precise is almost always an idea pretending to be a fact.
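The standard error mentioned above comes from classical test theory, where the standard error of measurement (SEM) is the score's standard deviation multiplied by the square root of one minus the test's reliability. The sketch below computes it and the band of plausible scores it implies. The function names, and the SD of 200 and reliability of 0.91 used in the example, are hypothetical illustration values, not published figures for any real test; the ~68% and ~95% coverage labels assume normally distributed measurement error.

```python
import math


def standard_error_of_measurement(score_sd: float, reliability: float) -> float:
    """Classical test theory: SEM = SD * sqrt(1 - reliability)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    return score_sd * math.sqrt(1.0 - reliability)


def score_band(observed: float, sem: float, z: float = 1.0) -> tuple[float, float]:
    """Band of plausible scores around an observed score: observed +/- z * SEM.

    z = 1.0 covers roughly 68% and z = 1.96 roughly 95%, assuming normal error.
    """
    return observed - z * sem, observed + z * sem


if __name__ == "__main__":
    # Hypothetical values chosen only to illustrate the arithmetic.
    sem = standard_error_of_measurement(score_sd=200, reliability=0.91)
    low, high = score_band(1200, sem)
    print(f"SEM ~ {sem:.0f}; a reported 1200 is roughly {low:.0f} to {high:.0f}")
```

Under those hypothetical inputs the SEM is about 60 points, so a single reported 1200 is better read as a band of roughly 1140 to 1260.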

"a simple, brutal number, which can have only a deceptive precision" (Alfred Binet, father of IQ testing)

Concept 02

The differences we measure at 16 have been shaped since students were 5. What looks like individual achievement is almost always accumulated advantage: adults with time to invest, schools that make learning an exploration, the ability to fail safely, and the comfort to sacrifice the present for the future. We don't measure intelligence or ability. We measure the resources that have given students the opportunity to show what they've learned.

Concept 03

When colleges describe themselves as "highly selective," the language centers the students who got in. "Highly rejective" centers the institution's decision and exposes how the "selective" framing distorts the discussion of what college is and how hard admission really is. To call it selective is to name only the benefit and ignore the scale of the rejection. That is not neutral language. That is a choice.

Visit highlyrejective.com. Related: What is a Good College?

Concept 04

Merit sounds like an objective measure. It isn't. When you dig into how merit gets defined in educational systems, it almost always comes down to a test score or a GPA. We're taking metrics and calling them merit. Smart and prepared are not the same thing. Gifted and first-to-the-table are not the same thing. We must stop using the language of individual achievement to disguise systemic advantages. Access influences success, and wealth provides access. Meritocracy is often just rebranded aristocracy. The question isn't who has merit; it's who gets to define it, and whose definition we let stand.

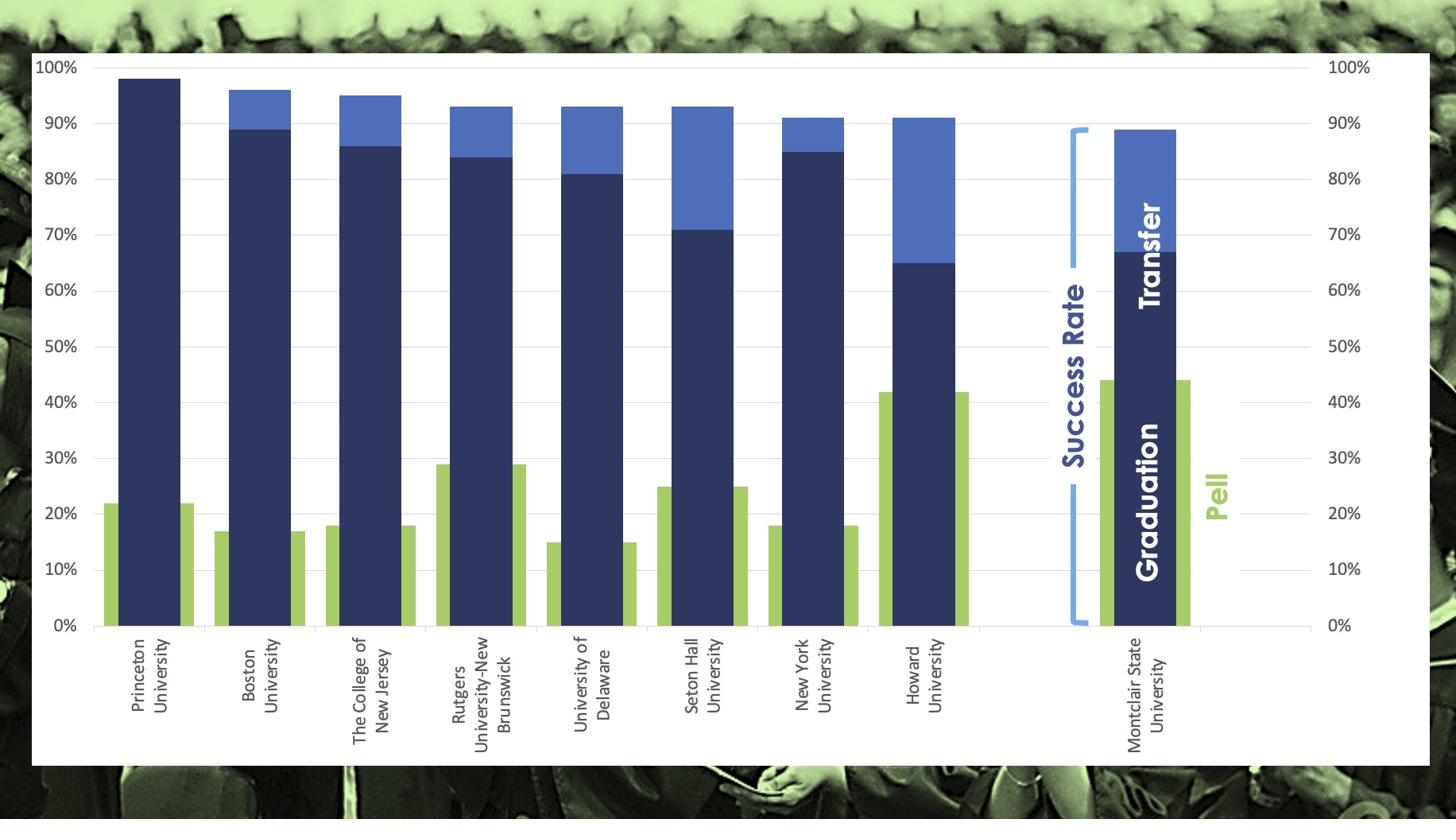

Graduation rates ignore students who transfer and succeed elsewhere. Add each school's Pell rate (the share of its students receiving Pell Grants) and the picture changes entirely: schools essentially line up by the wealth of the students who attend.

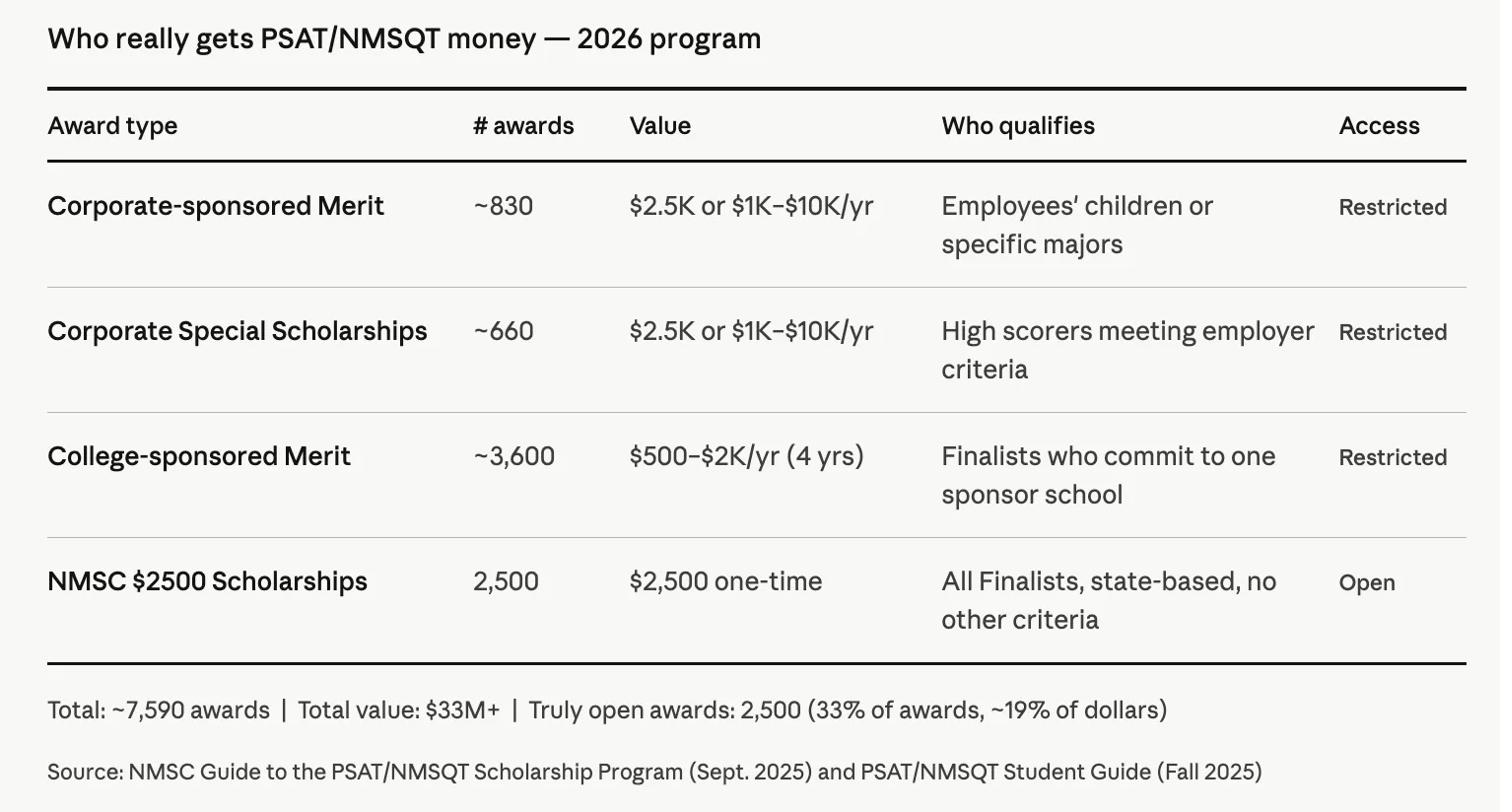

120 of the ~130 corporate sponsor programs are limited to children of the sponsors' employees. In effect, they are corporate benefits laundered through a prestige competition forced on 1.3 million kids.

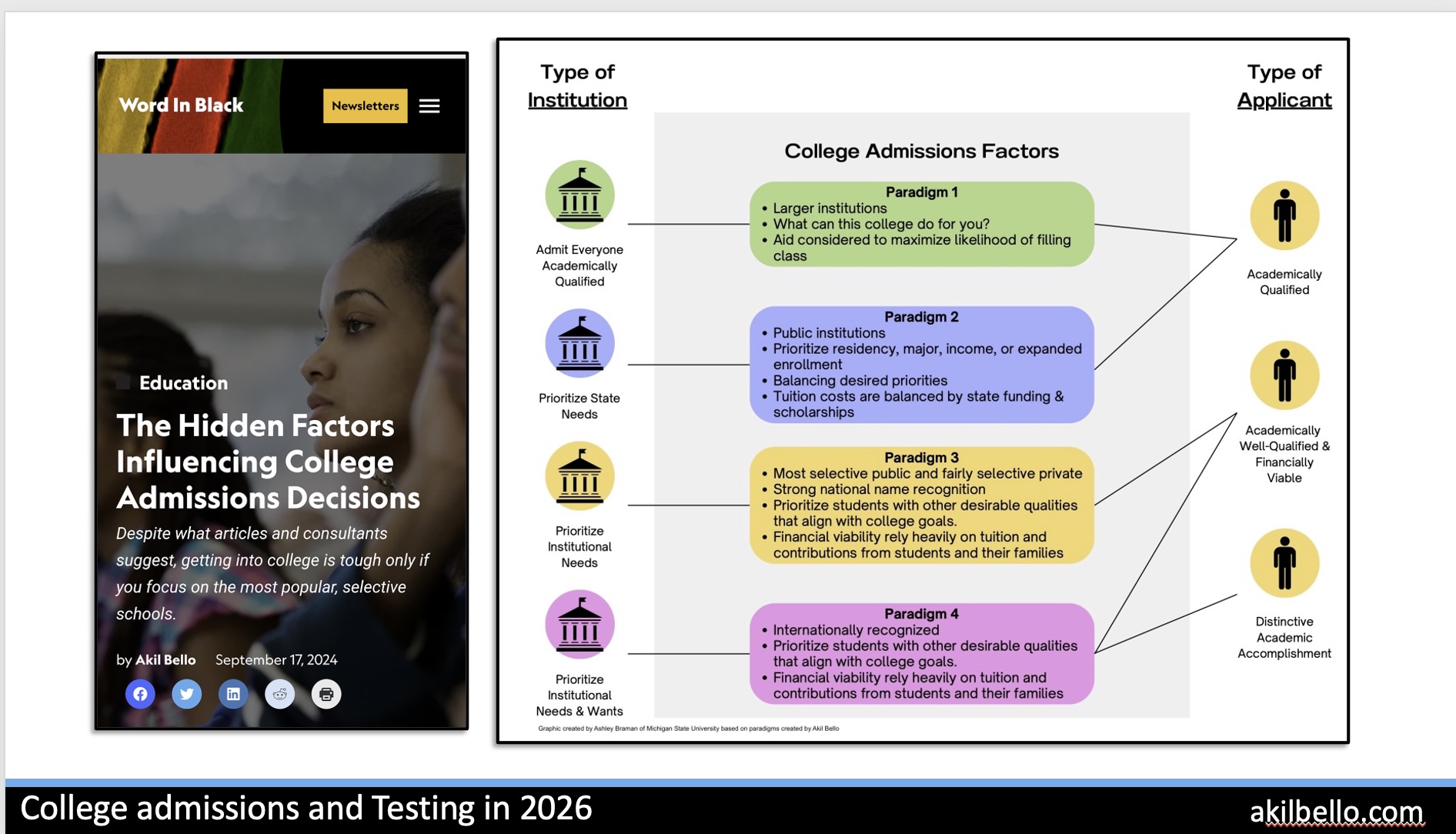

Most families apply as if every college uses the same criteria. They don't. Understanding which paradigm a school operates in changes every decision.

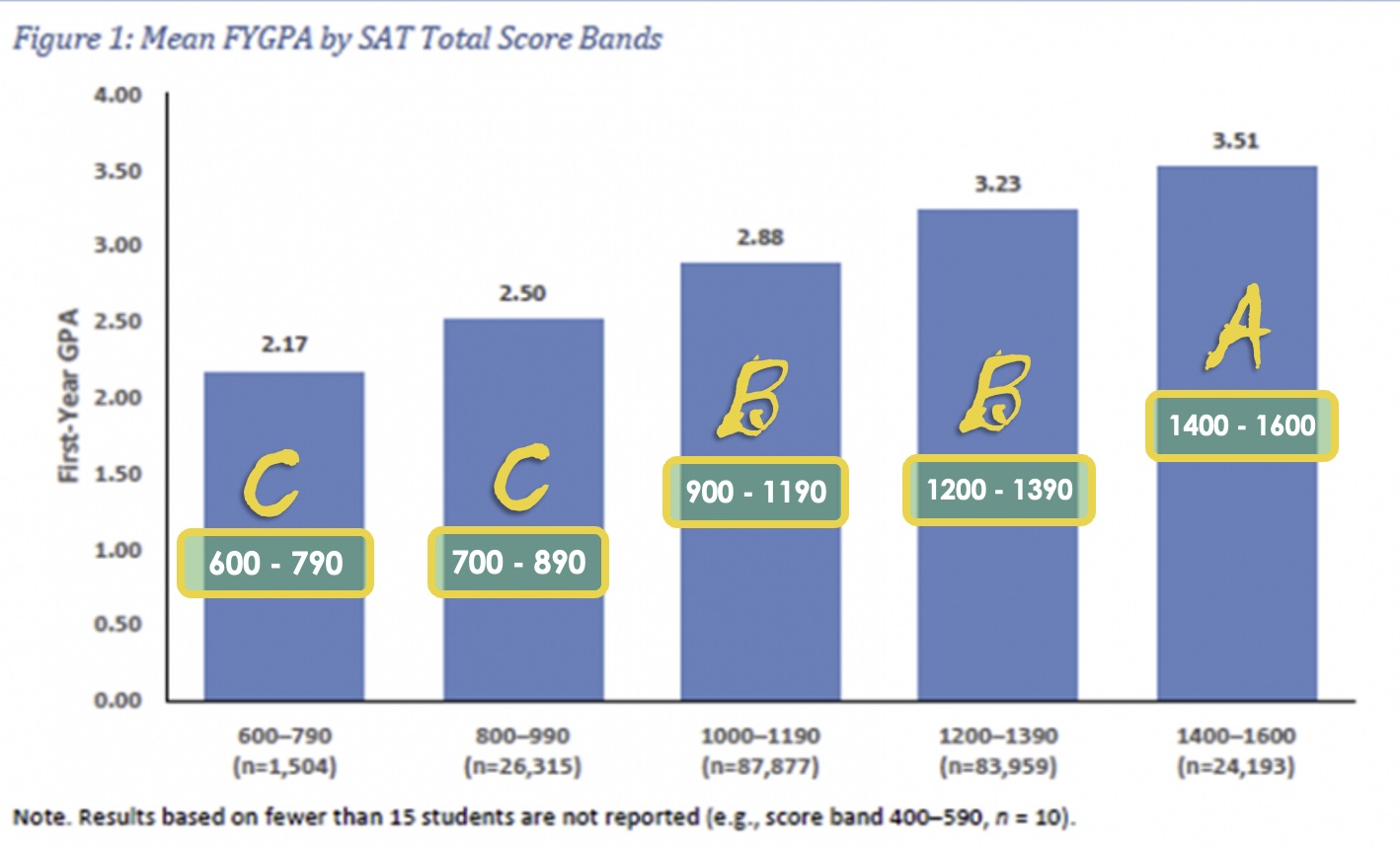

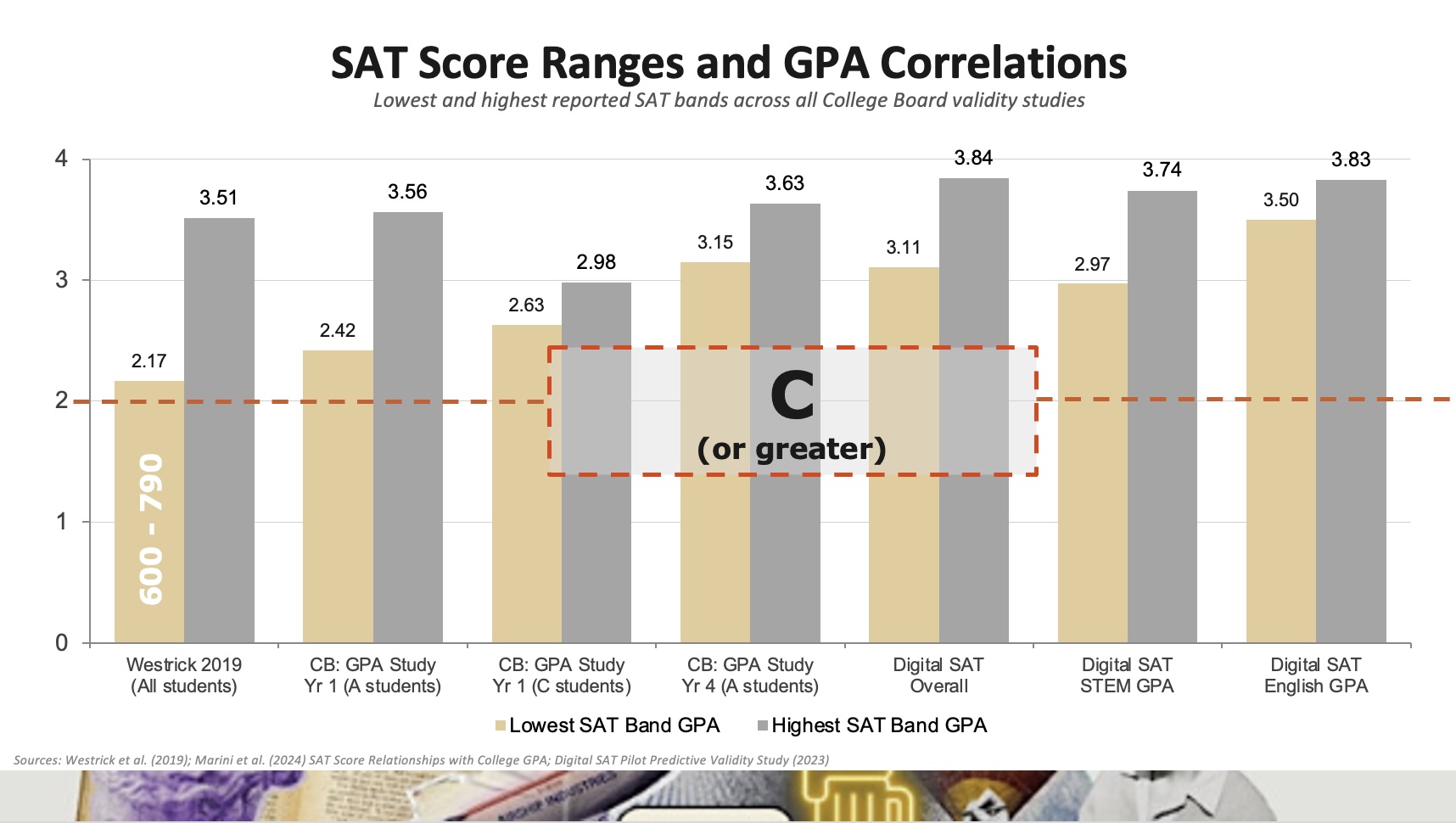

The relationship between GPA and SAT scores is real but weak — and it's mediated by income and access to preparation, not raw academic ability.
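One standard way to ask how much of a correlation survives once a third variable is accounted for is the first-order partial correlation. The sketch below computes it from three pairwise correlations, with x, y, and z standing in for GPA, SAT score, and family income. The input values are hypothetical, not estimates from any dataset, and a partial correlation only removes the linear association with z, so it is a simpler check than a formal mediation analysis.

```python
import math


def partial_correlation(r_xy: float, r_xz: float, r_yz: float) -> float:
    """Correlation of x and y after removing the linear association of each with z.

    r_xy.z = (r_xy - r_xz * r_yz) / sqrt((1 - r_xz**2) * (1 - r_yz**2))
    """
    denom = math.sqrt((1 - r_xz**2) * (1 - r_yz**2))
    if denom == 0:
        raise ValueError("z is perfectly correlated with x or y; result undefined")
    return (r_xy - r_xz * r_yz) / denom


if __name__ == "__main__":
    # Hypothetical correlations: x = GPA, y = SAT score, z = family income.
    r_gpa_sat, r_gpa_income, r_sat_income = 0.35, 0.40, 0.60
    print(round(partial_correlation(r_gpa_sat, r_gpa_income, r_sat_income), 2))
```

With these hypothetical inputs, a GPA-SAT correlation of 0.35 shrinks to about 0.15 once income is held constant, which is the kind of pattern the caption describes.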

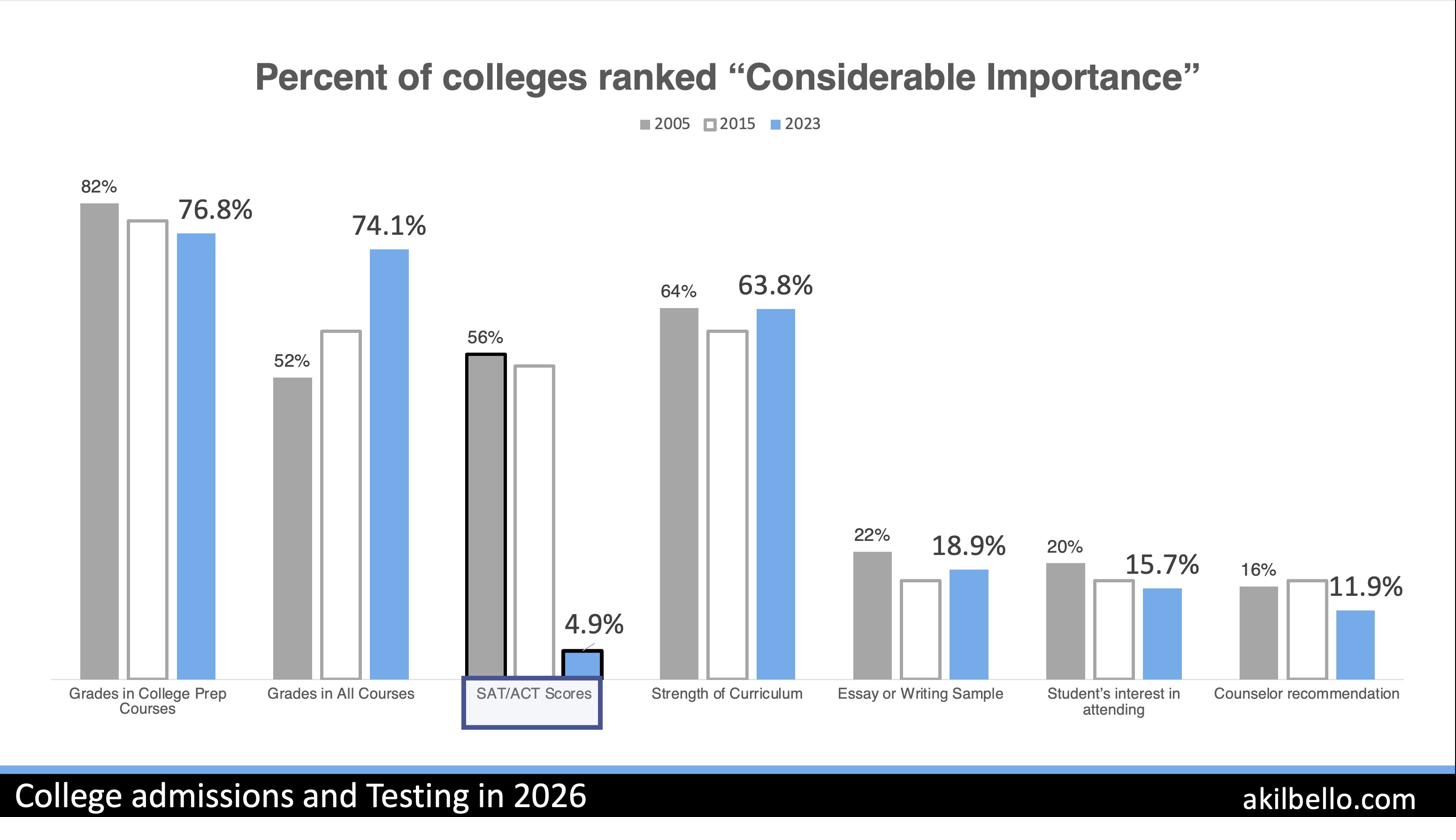

Between 2005 and 2023, the share of admissions officers rating SAT/ACT scores of "considerable importance" fell from 56% to 4.9%. The testing industry never updated its marketing.
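Because both figures are shares, the drop from 56% to 4.9% can be stated two ways: in percentage points (56 minus 4.9, or 51.1 points) or as a relative decline (51.1 / 56, about 91%). The small helper below, whose name is hypothetical, keeps the two measures apart, since they are easy to conflate.

```python
def share_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (change in percentage points, relative change in percent)."""
    if before_pct == 0:
        raise ValueError("relative change is undefined when the starting share is 0")
    point_change = after_pct - before_pct
    relative_change = point_change / before_pct * 100
    return point_change, relative_change


if __name__ == "__main__":
    # Figures from the caption: 56% in 2005, 4.9% in 2023.
    points, relative = share_change(56.0, 4.9)
    print(f"{points:.1f} percentage points, {relative:.1f}% relative change")
```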

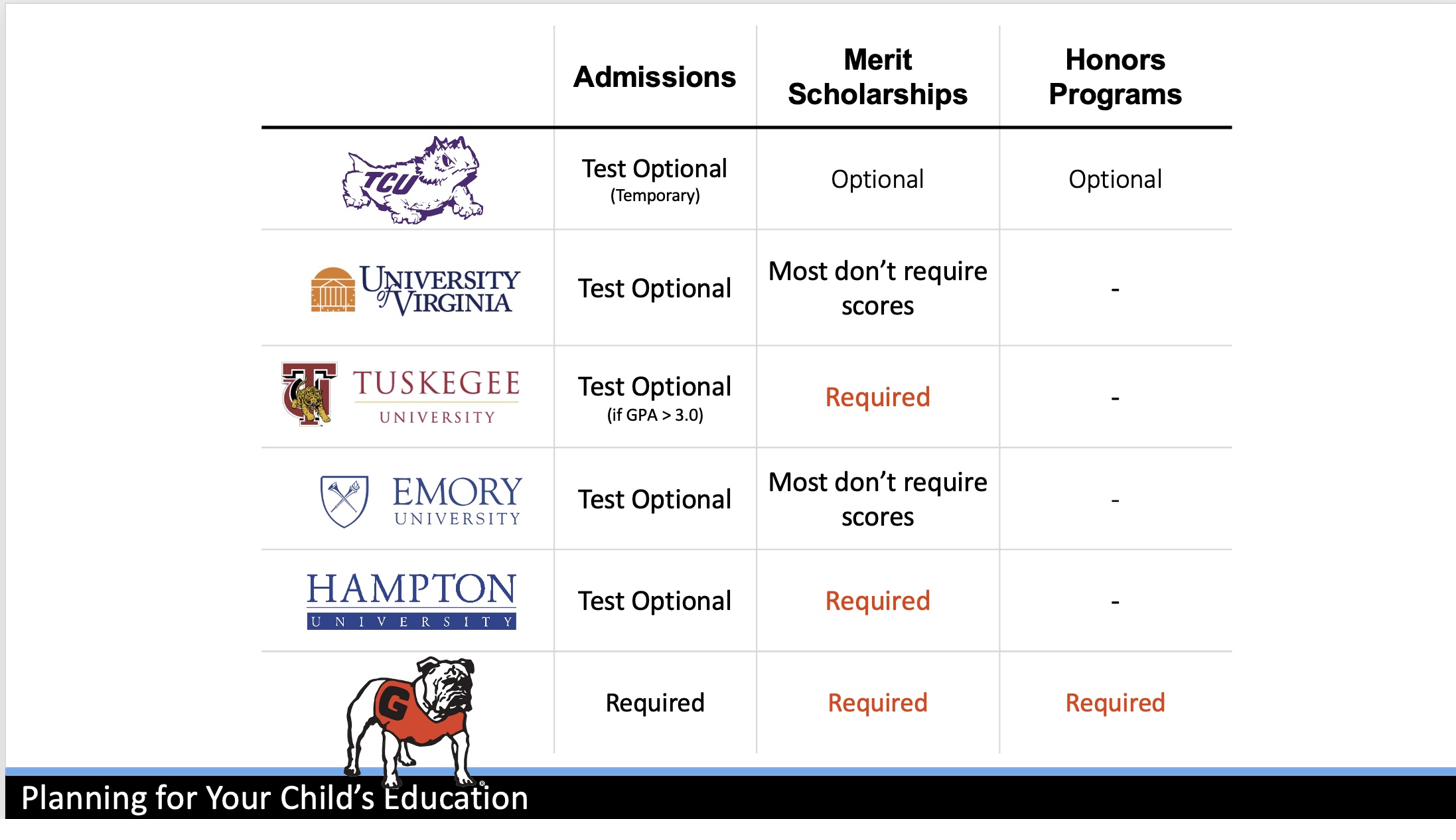

Whether to submit a test score depends on where you're applying, where your score falls relative to the school's range, and whether test-optional actually means test-optional at that school.
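Of the three factors, only the second is mechanical. The sketch below places a score relative to a school's published range, assuming that range is reported as 25th and 75th percentile scores (the common "middle 50%"). The function, its labels, and the example range are hypothetical, and it deliberately stops short of a recommendation: where you're applying and how a school really treats test-optional applicants can't be reduced to a formula.

```python
def position_in_range(score: int, p25: int, p75: int) -> str:
    """Describe where a score sits relative to a school's 25th-75th percentile range."""
    if p25 > p75:
        raise ValueError("p25 must not exceed p75")
    if score < p25:
        return "below range"
    if score > p75:
        return "above range"
    midpoint = (p25 + p75) / 2
    return "upper half of range" if score >= midpoint else "lower half of range"


if __name__ == "__main__":
    # Hypothetical middle-50% range for an illustrative school.
    for s in (1150, 1250, 1350, 1450):
        print(s, position_in_range(s, p25=1200, p75=1400))
```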

The entire narrative around "getting into college" is built around less than 1% of institutions. The other 99% admit the vast majority of students who apply.

The College Board labels certain scores as "benchmarks." The research on what those numbers actually predict is far more complicated than the marketing suggests.

Every factor used in college admissions advantages wealthy students. Every single one. Except Federal Aid — and that only matters if a student gets in.

Akil speaks on these frameworks in settings ranging from conference stages to policy briefings.